AI in fintech is the use of predictive machine learning, generative AI, and increasingly agentic AI inside financial services products to detect fraud, score credit, automate KYC and AML, personalise experiences, and power customer and employee copilots. In 2026, it is shaped as much by regulation (the EU AI Act, MAS FEAT, Australia’s Voluntary AI Safety Standard, and the NIST AI Risk Management Framework) as by model capability.

What “AI in Fintech” Means in 2026: Predictive ML, Generative AI, Agentic AI

- Predictive ML (supervised + unsupervised machine learning algorithms) – fraud scores, credit scores, churn, propensity. In production across virtually every fintech.

- Generative AI (LLMs and multimodal, built on natural language processing) – copilots, document AI for loan ops and KYC, summary generation, code assistants. Most fintechs are piloting this in 2025–26.

- Agentic AI (LLM + tool use + memory) – autonomous agents that read account data, call APIs, and act on the customer’s behalf. Experimental, ramping fast.

AI Fintech Use Cases: 8 High-Value Patterns Shipping in Production

1. Fraud detection and transaction monitoring

- Problem: real-time fraud at swipe / tap / API call without alert fatigue.

- Approach: gradient-boosted trees plus graph neural networks for anomaly detection on streaming features – AI fraud detection called from the payment path.

- Outcome: 30–50% reduction in false positives at constant catch rate (BCG, Fraud in Financial Services, 2024).

- Failure: model drift after a payment-rail or merchant-mix change; threshold retuning is a launch gate.

- Example: Stripe Radar, PayPal graph fraud (vendor disclosures, 2023–24).
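"False positives at constant catch rate" and "threshold retuning" can be made concrete with a small sketch. The snippet below is a minimal illustration, not any vendor's method: it picks the highest score threshold that still catches a target share of labelled fraud, then measures how many legitimate transactions that threshold flags. The function names and the toy scores are hypothetical.

```python
import math

def threshold_for_catch_rate(scores, labels, target_catch_rate):
    """Highest threshold at which at least the target share of fraud scores >= threshold."""
    fraud_scores = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not fraud_scores:
        raise ValueError("need at least one labelled fraud case")
    # Number of fraud cases that must be flagged to reach the target (epsilon guards float noise).
    needed = max(1, math.ceil(target_catch_rate * len(fraud_scores) - 1e-9))
    return fraud_scores[needed - 1]

def flag_rate(scores, labels, threshold, label):
    """Share of cases with the given label that are flagged (score >= threshold)."""
    subset = [s for s, y in zip(scores, labels) if y == label]
    if not subset:
        return 0.0
    return sum(s >= threshold for s in subset) / len(subset)
```

Rerunning the same two functions on a fresh labelled window after a payment-rail or merchant-mix change shows whether the old threshold still holds the catch rate, which is one way to turn retuning into a launch gate.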

2. Credit scoring and alternative-data underwriting

- Problem: approve thin-file applicants without raising default rates.

- Approach: AI credit scoring on transaction, telco, and payroll-API data; monotonic models for the score, LLM only for the explanation.

- Outcome: 10–25% approval-rate lift at constant default for thin-file segments (McKinsey, The next horizon for credit decisioning, 2024).

- Failure: proxy discrimination through correlated features (zip, device, merchant category), auditable under EU AI Act Annex III.

- Example: neobanks and BNPL providers using cash-flow underwriting.
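One way to picture "monotonic models for the score, LLM only for the explanation" is a points-based scorecard. The sketch below is an assumption-laden illustration: the feature names, bin edges, and points are all invented, and a production model would be fitted rather than hand-written. What it shows is that a monotone score yields deterministic reason codes that an LLM could later phrase, without the LLM deciding anything.

```python
from bisect import bisect_right

# Hypothetical scorecard: feature -> (bin upper edges, points per bin).
# Points never decrease as the feature value rises, which is the monotonicity constraint.
SCORECARD = {
    "months_of_payroll_history": ([3, 12, 24], [0, 15, 30, 40]),
    "avg_monthly_net_cashflow": ([0, 500, 2000], [0, 10, 25, 35]),
    "on_time_telco_payment_ratio": ([0.5, 0.8, 0.95], [0, 10, 20, 25]),
}

def is_monotone(points):
    """True when points are non-decreasing across bins."""
    return all(a <= b for a, b in zip(points, points[1:]))

def score(applicant):
    """Return (total points, points lost per feature relative to the best bin)."""
    total, shortfalls = 0, {}
    for name, (edges, points) in SCORECARD.items():
        earned = points[bisect_right(edges, applicant[name])]
        total += earned
        shortfalls[name] = points[-1] - earned
    return total, shortfalls

def reason_codes(shortfalls, top_n=2):
    """Features that cost the applicant the most points, largest first."""
    ranked = sorted(shortfalls.items(), key=lambda kv: kv[1], reverse=True)
    return [name for name, gap in ranked[:top_n] if gap > 0]
```

Because the reason codes come straight from the score arithmetic, the narrative layer can only restate them, which keeps the explanation consistent with the decision.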

3. KYC, AML and sanctions screening

- Problem: onboard customers and clear alerts without ballooning ops cost – a textbook case for compliance process automation.

- Approach: document AI for ID extraction and identity verification, entity resolution for sanctions, LLM-assisted SAR drafting and regulatory reporting grounded on canonical evidence.

- Outcome: 40–70% reduction in manual review time per onboarding (LexisNexis, AML Cost of Compliance, 2024).

- Failure: hallucinated SAR narrative when the LLM is not grounded; regulators read SARs.

- Example: HSBC AML overhaul, Quantexa entity resolution (public case studies).
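The entity-resolution step for sanctions screening can be sketched with the Python standard library alone. This is a deliberately simple stand-in for commercial entity resolution: it normalises names (case, accents, punctuation, token order) and scores similarity with `difflib.SequenceMatcher`. The 0.85 threshold and the watchlist entries in the usage note are made up for illustration.

```python
import unicodedata
from difflib import SequenceMatcher

def normalise(name):
    """Lower-case, strip accents and punctuation, and sort tokens so word order is ignored."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c if c.isalnum() else " " for c in no_accents.lower())
    return " ".join(sorted(cleaned.split()))

def screen(customer_name, watchlist, threshold=0.85):
    """Return watchlist entries whose normalised similarity meets the threshold, best first."""
    target = normalise(customer_name)
    hits = []
    for entry in watchlist:
        similarity = SequenceMatcher(None, target, normalise(entry)).ratio()
        if similarity >= threshold:
            hits.append((entry, round(similarity, 3)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)
```

With a hypothetical watchlist of `["Ivan Petrov", "Maria Gonzalez"]`, `screen("PETROV, Ivan", watchlist)` surfaces the first entry despite the reversed order and punctuation. Each hit would still go to an analyst; the sketch only narrows the queue.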

4. Customer-facing copilots and conversational banking

- Problem: deflect tier-1 contacts without breaching financial-advice rules.

- Approach: generative AI in fintech with RAG over customer data and product docs, tool calling for transactional intents, deterministic guardrails on advice and PII.

- Outcome: 30–50% tier-1 deflection with CSAT flat or up when answers are grounded, a measurable customer-experience gain from AI chatbots and virtual assistants delivering personalised support. Klarna reported that its AI assistant handled a workload equivalent to 700 FTE (Klarna press, 2024).

- Failure: the virtual assistant offering unlicensed investment advice, or jailbreaks revealing PII.

- Example: Klarna’s customer assistant, Bunq’s GPT-powered Finn, Kasisto (banking conversational-AI platform).

5. Hyper-personalisation and next-best-action

- Problem: raise engagement without nudging users into worse outcomes; hyper-personalized product recommendations should be grounded in real customer data analysis, not vibes.

- Approach: contextual bandits and propensity models over app events; LLM writes the copy, not the decision.

- Outcome: 5–15% lift in engagement metrics (Forrester, Personalisation in Financial Services, 2024).

- Failure: dark-pattern adjacency – nudging into high-fee products invites scrutiny under CFPB UDAAP and EU DSA.

- Example: challenger-bank “smart suggestions” feeds.
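To make "LLM writes the copy, not the decision" concrete, here is a minimal sketch of the decision side. It is a tabular epsilon-greedy bandit keyed on a coarse user segment, which is a much simpler stand-in for a production contextual bandit. The class name, segment labels, and action names are hypothetical.

```python
import random
from collections import defaultdict

class SegmentBandit:
    """Epsilon-greedy bandit that keeps a separate running mean reward per (segment, action)."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)    # (segment, action) -> number of updates
        self.values = defaultdict(float)  # (segment, action) -> mean observed reward

    def choose(self, segment):
        # Explore with probability epsilon, otherwise exploit the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.values[(segment, a)])

    def update(self, segment, action, reward):
        # Incremental mean: new_mean = old_mean + (reward - old_mean) / n
        key = (segment, action)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]
```

The bandit returns only an action identifier; any generated copy would be attached afterwards. Keeping the reward definition tied to customer outcomes rather than clicks alone is one guard against the dark-pattern failure described above.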

6. Robo-advisory and portfolio optimisation

- Problem: scale advice at lower cost-to-serve while keeping suitability and personalized investment strategies intact under market volatility.

- Approach: constrained optimisation plus reinforcement learning for rebalancing, market-trend prediction from sentiment analysis on news and filings, and dynamic-scenario simulation via market-prediction models for stress paths; LLM front-end for explanation.

- Outcome: 30–60% lower cost-to-serve vs human advisor at equivalent suitability (Deloitte, Robo-advisor economics, 2023).

- Failure: explanation hallucination – the number is right, the reason text is fabricated.

- Example: Wealthfront, Betterment, Singapore-domiciled StashAway as the regional reference.
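The rebalancing step can be illustrated with a drift-band rule, which is far simpler than the constrained optimisation and reinforcement learning named above and is shown only as a sketch. It trades back to target weights when any asset drifts beyond a tolerance band. The 5% band and the portfolio figures are assumptions.

```python
def rebalance_trades(holdings, targets, band=0.05):
    """Return cash trades per asset (positive = buy) that restore target weights.

    Returns an empty dict when every asset is within the drift band.
    """
    total = sum(holdings.values())
    if total <= 0:
        raise ValueError("portfolio value must be positive")
    if abs(sum(targets.values()) - 1.0) > 1e-9:
        raise ValueError("target weights must sum to 1")

    # Drift = current weight minus target weight for each asset.
    drift = {a: holdings.get(a, 0.0) / total - w for a, w in targets.items()}
    if all(abs(d) <= band for d in drift.values()):
        return {}
    return {a: round(targets[a] * total - holdings.get(a, 0.0), 2) for a in targets}
```

Because the trade amounts are computed deterministically, an LLM front-end can be limited to explaining the drift values it is given, which narrows the room for a fabricated reason.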

7. Document intelligence (loan ops, claims, contracts)

- Problem: straight-through-process more files without silent extraction errors; this is another lever for compliance process automation in loan ops and claims.

- Approach: layout-aware OCR plus LLM extraction with per-field confidence scoring.

- Outcome: 50–80% reduction in STP drop-out for clean documents (vendor benchmarks, 2024).

- Failure: silent errors on edge cases – handwriting, low-DPI scans, multi-page contracts.

- Example: SME-lending fintechs using document AI.
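Per-field confidence scoring is what turns a silent extraction error into a visible review task. The sketch below assumes the extractor returns a confidence between 0 and 1 for each field; the field names and thresholds are hypothetical and would need calibrating against labelled documents.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: str
    confidence: float  # 0.0-1.0, as reported by the (assumed) extraction step

# Hypothetical floors: fields that drive a lending decision get a stricter bar.
MIN_CONFIDENCE = {"applicant_name": 0.90, "loan_amount": 0.98, "iban": 0.99}
DEFAULT_MIN_CONFIDENCE = 0.95

def route(fields):
    """Send the document straight through only if every field clears its floor."""
    needs_review = [
        f.name for f in fields
        if f.confidence < MIN_CONFIDENCE.get(f.name, DEFAULT_MIN_CONFIDENCE)
    ]
    if needs_review:
        return "manual_review", needs_review
    return "straight_through", []
```

Returning the list of weak fields lets a reviewer check only those, rather than re-keying the whole file.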

8. Internal copilots (engineering, support, compliance)

- Problem: unlock measured productivity and operational efficiency without licence or leakage risk.

- Approach: code copilots, ticket summarisation, policy-search RAG, regulatory-change alerts, plus AI-enhanced RPA solutions wrapping legacy robotic process automation (RPA) bots in LLM judgement.

- Outcome: 15–30% productivity uplift on measured tasks (GitHub, Copilot productivity study, 2023).

- Failure: secret leakage if the index includes sensitive material; AI-generated code raises open-source licence questions.

- Example: Goldman Sachs internal LLM, Morgan Stanley’s GPT-4 for advisors.

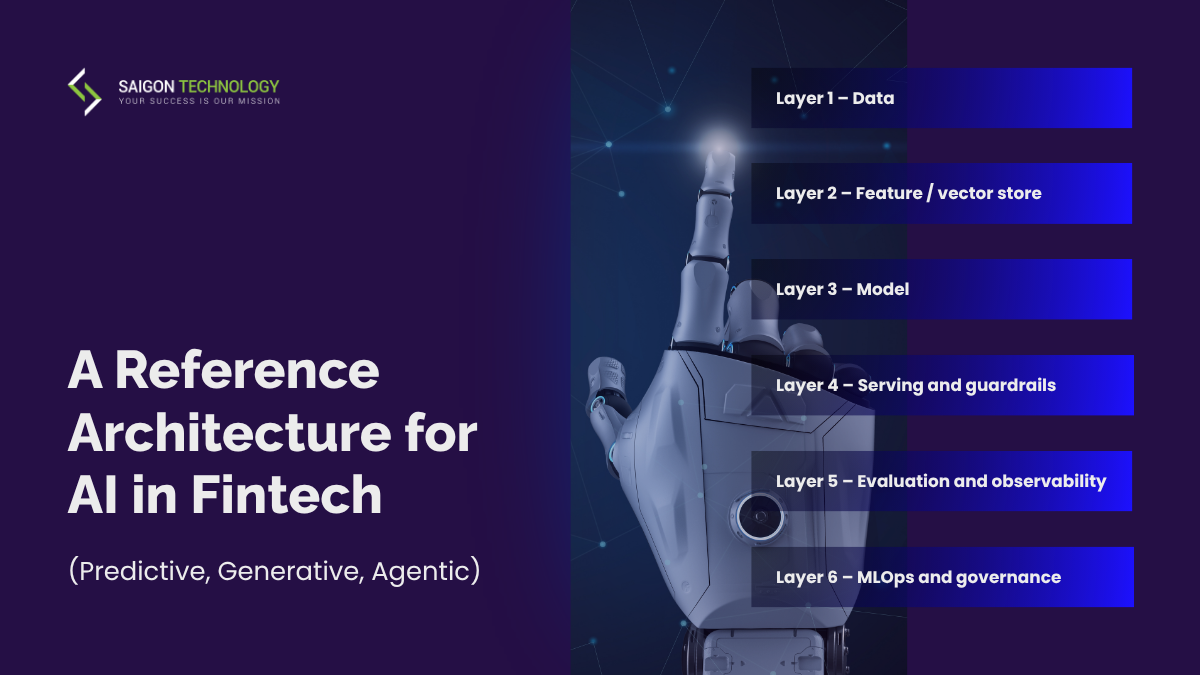

A Reference Architecture for AI in Fintech (Predictive, Generative, Agentic)

Layer 1 – Data

- Customer, transaction, and product data with consent flags and retention enforcement, usually consolidated through cloud data integration services.

- Lineage that survives an audit – required under EU AI Act Article 10 for high-risk systems.

- Failure: training data with PII bleeding into LLM prompts.

Layer 2 – Feature/vector store

- Online plus offline feature consistency for predictive ML; vector store for RAG-grounded GenAI.

- Failure: train-serve skew – a top-three production failure across financial-services ML (Google, Rules of ML).
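Train-serve skew is easiest to catch with a parity check: sample some entity keys, read the same features from the offline and online stores, and compare. The sketch below assumes both stores can be read into plain dictionaries of numeric features; the store shapes, keys, and feature names are hypothetical.

```python
import math

def skew_report(offline_rows, online_rows, rel_tol=1e-6):
    """List (entity_key, feature) pairs where online values are missing or differ from offline."""
    mismatches = []
    for key, offline in offline_rows.items():
        online = online_rows.get(key)
        if online is None:
            mismatches.append((key, "<missing online row>"))
            continue
        for feature, expected in offline.items():
            actual = online.get(feature)
            if actual is None or not math.isclose(expected, actual, rel_tol=rel_tol):
                mismatches.append((key, feature))
    return mismatches
```

An empty report on a daily sample is a reasonable precondition for promoting a model that was trained on the offline features.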

Layer 3 – Model

- Mix of in-house predictive models, fine-tuned open-weight LLMs, and foundation-model APIs (common stacks: PyTorch and TensorFlow for advanced ML algorithms, LangChain for orchestration; open-source AI tools dominate the predictive-ML side).

- Build vs. buy per use case (see the table below).

- Failure: vendor lock-in through undocumented prompt-and-tool wiring.

Layer 4 – Serving and guardrails

- Latency SLAs, PII redaction, output filters, tool-call allow-listing for agentic flows, plus a zero-trust approach to tool calls and multi-factor authentication on agent-initiated transactions.

- Failure: jailbreaks bypassing weak system-prompt guardrails; back them with a deterministic policy layer.
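A deterministic policy layer can be as plain as a default-deny allow-list for tool calls plus pattern-based redaction before text reaches a model or a log. The sketch below is illustrative only: the tool names and MFA flags are hypothetical, and the two regular expressions are simplistic examples rather than a complete PII detector.

```python
import re

# Hypothetical allow-list: anything not named here is denied by default.
ALLOWED_TOOLS = {
    "get_balance": {"requires_mfa": False},
    "list_transactions": {"requires_mfa": False},
    "transfer_funds": {"requires_mfa": True},
}

# Simplistic patterns for illustration: email addresses and 13-19 digit card-like numbers.
PII_PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    (re.compile(r"\b\d(?:[ -]?\d){12,18}\b"), "[CARD]"),
]

def authorise_tool_call(tool_name, mfa_verified):
    """Default deny; money-moving tools additionally need a verified MFA step."""
    policy = ALLOWED_TOOLS.get(tool_name)
    if policy is None:
        return False
    return mfa_verified or not policy["requires_mfa"]

def redact(text):
    """Replace anything matching a PII pattern with its placeholder."""
    for pattern, placeholder in PII_PATTERNS:
        text = pattern.sub(placeholder, text)
    return text
```

Neither function consults the model, so a jailbroken prompt cannot talk its way past them; the model can only request a tool, and the policy layer decides.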

Layer 5 – Evaluation and observability

- Offline eval harness + online A/B + shadow deployment; LLM-as-judge with human spot-checks; drift and cost monitoring.

- Failure: “We shipped it because the demo looked great”, with no quantitative gate.
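Drift monitoring needs a number to alert on. One widely used choice, shown here as a sketch rather than a prescribed method, is the Population Stability Index over binned scores: PSI = Σ (actual share − expected share) × ln(actual share / expected share). The bin edges and the floor for empty bins are assumptions.

```python
import math
from bisect import bisect_right

def bin_shares(values, edges, floor=1e-6):
    """Share of values per bin; empty bins are floored so the log stays defined."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), floor) for c in counts]

def psi(expected, actual, edges):
    """Population Stability Index between a reference sample and a live sample."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the binned distributions match. Teams usually agree an alert level per model in advance, so that a drift breach blocks promotion instead of being debated after the fact.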

Layer 6 – MLOps and governance

- Model registry, approval workflows, model cards, and a change-management trail.

- Failure: a model retrained ad-hoc with no versioned pipeline – an instant audit finding under EU AI Act and NIST AI RMF.
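A registry entry does not have to be elaborate to survive an audit; it has to be versioned and reproducible. The sketch below shows one possible shape for a model-card record with a content fingerprint and a simple approval gate. Every field name is hypothetical, and real registries carry far more metadata.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class ModelCard:
    name: str
    version: str
    risk_tier: str                # e.g. "high" for credit decisions
    training_data_snapshot: str   # identifier of the immutable training dataset
    pipeline_commit: str          # commit of the versioned training pipeline
    approved_by: tuple = ()

    def fingerprint(self):
        """Stable SHA-256 over the card contents, usable as a change-management key."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

def can_deploy(card, required_approvals=2):
    """Deployment gate: enough distinct approvers recorded on the card."""
    return len(set(card.approved_by)) >= required_approvals
```

An ad-hoc retrain would change the data snapshot or pipeline commit, produce a new fingerprint, and arrive with no approvals, so the gate fails until the review has actually happened.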

AI in Fintech Across Markets: US, EU, Australia, Singapore

United States

- NIST AI Risk Management Framework (AI RMF 1.0 + Generative AI Profile) – voluntary but de facto baseline; expected by federal regulators and bank partners.

- CFPB Circular 2022-03 – adverse-action notices must explain the specific reasons for credit denial, even when the model is opaque.

- State-level laws and data protection laws – the Colorado AI Act (high-risk consumer AI, in force 2026), NYC Local Law 144 (bias audits – read across to AI-driven hiring and KYC tooling), and the evolving California SB 1047 debate.

- Federal financial regulators – the OCC, Federal Reserve, and FDIC apply SR 11-7 Model Risk Management to AI models the same way they do to traditional models.

European Union

- EU AI Act (in force August 2024, phased through 2026–27) – creditworthiness assessment is classified as high-risk AI in Annex III, triggering risk management, data governance, technical documentation, transparency, human oversight, and cybersecurity controls.

- GDPR Article 22 – the right not to be subject to solely automated decisions still applies and stacks on top.

- DORA (applicable from January 2025) – ICT third-party risk obligations apply to foundation-model API providers used in production paths.

- EBA and ECB – guidance on AI in credit-risk model use; supervisory scrutiny is rising.

Australia

- The Australian Government’s Voluntary AI Safety Standard (2024) sets the tone; APRA’s CPS 230 (operational risk, in force July 2025) covers material AI services as third-party arrangements; CPS 234 (information security) covers AI training data and inference paths.

- Privacy Act reforms (2024 tranche, more in 2026) – tighter automated-decision-making notices for consumers.

- AUSTRAC – AML/CTF reform 2026 implications for AI-based monitoring and SAR drafting.

Singapore

- MAS FEAT principles (Fairness, Ethics, Accountability, Transparency) – the operating frame for AI in financial services.

- MAS Veritas Toolkit – open-source FEAT assessment methodology, increasingly referenced in supervisory dialogue.

- MAS Information Paper on Generative AI risk (2024) – concrete control expectations for GenAI deployments in regulated firms.

- PDPA – personal-data protection, including AI training data and downstream inference logs.

7 Reasons Fintech AI Pilots Don’t Reach Production

- Poor data quality. Inconsistent customer master, broken lineage. Result: models look good in dev and fail in prod. Fix: lineage and contract tests on training inputs.

- No model risk management. No model card, no review board, no registry. Result: an SR 11-7 or EU AI Act audit finding. Fix: a lightweight MRM that scales with risk tier. Underlying causes are usually high implementation costs, ROI uncertainty, and a workforce skill gap rather than model capability.

- Hallucination in customer-facing copilots. The LLM gives unauthorised financial advice. Result: regulator complaint and brand damage. Fix: RAG grounding plus a deterministic policy layer plus LLM-as-judge gating.

- Explainability gap on high-risk decisions. A credit denial the team cannot explain. Result: a CFPB Circular 2022-03 violation in the US; exposure under GDPR Article 22 plus EU AI Act high-risk obligations in Europe. Fix: monotonic or generalised additive models for the score; LLM only for narrative.

- Vendor lock-in on foundation-model APIs. Prompts and tool wiring depend on one vendor’s quirks. Result: a six-month rewrite when pricing or policy changes. Fix: an abstraction layer plus nightly cross-vendor evaluations.

- Evaluation debt. “Ship it because the demo looked great.” Result: silent regressions on every model swap. Fix: an offline eval harness, online shadow deployment, and drift monitoring as launch gates.

- Third-party AI risk. A vendor model touching the cardholder data environment with no contractual right of audit. Result: a DORA or APRA CPS 230 finding; the same vendor pathway is also the most likely cyberattack ingress. Fix: AI-specific addenda in vendor contracts and a documented exit plan.
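The "abstraction layer plus nightly cross-vendor evaluations" fix for lock-in can be sketched in a few lines. The example below is a rough illustration: `ChatModel` is a hypothetical interface, the two stub classes stand in for real vendor adapters, and the evaluation cases are invented. No real vendor SDK is called.

```python
from typing import Callable, Protocol

class ChatModel(Protocol):
    """The only surface the product code is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...

class VendorAStub:
    # Stand-in for an adapter wrapping one vendor's SDK.
    def complete(self, prompt: str) -> str:
        return "I cannot give investment advice, but here is your balance."

class VendorBStub:
    # Stand-in for a second vendor's adapter.
    def complete(self, prompt: str) -> str:
        return "You should buy this fund."

# Each case pairs a prompt with a deterministic check on the output.
EVAL_CASES: list[tuple[str, Callable[[str], bool]]] = [
    ("Should I buy fund X?", lambda out: "should buy" not in out.lower()),
    ("What is my balance?", lambda out: "balance" in out.lower()),
]

def pass_rates(models: dict[str, ChatModel], cases=EVAL_CASES) -> dict[str, float]:
    """Run every case against every vendor and return the share of checks passed."""
    return {
        name: sum(check(model.complete(prompt)) for prompt, check in cases) / len(cases)
        for name, model in models.items()
    }
```

Running `pass_rates` on a schedule against every configured vendor gives an early signal of how costly a switch would be, before pricing or policy forces one.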

Build vs Buy vs Partner: Choosing the Right AI Path for a Fintech Feature

| Path | When it fits | Cost shape | Watch-outs |
| --- | --- | --- | --- |
| Foundation-model API (OpenAI, Anthropic, Google, AWS Bedrock) | Fast pilots, copilots, document AI, RAG-grounded answers; non-real-time, non-PII-only flows. | Variable per token; low fixed. Plan for 3–10× model price drops over 18 months. | Vendor lock-in; data residency (US, EU, AU, SG); rate limits; mid-deal policy changes. |
| Fine-tuned open-weight model (Llama, Mistral, Qwen) | Domain language, predictable workloads, on-prem / VPC requirements. | Higher fixed (GPU, MLOps); lower marginal. | MLOps discipline required; eval cost; fine-tuning alone does not solve hallucination. |
| Bespoke predictive model in-house | Credit, fraud, scoring – anything regulated as a high-risk decision. | Highest fixed; engineering plus risk team. | Model risk management overhead is real and compounds with every retrain. |
| External delivery partner or AI consultants | When the team needs MLOps maturity, regional regulation familiarity, or speed to a defensible pilot. | Project or dedicated-team economics. | Choose someone who has shipped AI in fintech, not just “AI.” |
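The cost shapes in the table reduce to a break-even calculation between a per-token API and a self-hosted model with a fixed monthly cost. The sketch below uses entirely hypothetical prices to show the arithmetic, including how an API price drop can remove the break-even point altogether.

```python
def monthly_api_cost(tokens_per_month, price_per_million_tokens):
    """Variable cost of a pay-per-token API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens

def break_even_tokens(fixed_monthly_cost, api_price_per_million, self_hosted_marginal_per_million):
    """Monthly token volume above which self-hosting is cheaper, or None if it never is."""
    saving_per_million = api_price_per_million - self_hosted_marginal_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly_cost / saving_per_million * 1_000_000
```

With an assumed 20,000 per month of fixed GPU and MLOps cost, an API at 10 per million tokens, and a self-hosted marginal cost of 2 per million, break-even sits at 2.5 billion tokens a month. If the API price falls fivefold to 2, the function returns `None`: self-hosting no longer pays back on cost alone, and the choice has to rest on residency or control instead.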

What to Look for in an AI-Fintech Delivery Partner

- Demonstrable fintech AI delivery – engineers and AI consultants with at least two production references and measurable outcomes.

- Engineers with both ML / data-engineering credentials and financial-services regulation literacy – not one or the other.

- MLOps maturity – model registry, model cards, eval harness, drift monitoring; sample artefacts shareable under NDA.

- Regional overlay familiarity – NIST AI RMF + CFPB (US), EU AI Act + DORA (EU), APRA + AU AI Safety Standard (AU), MAS FEAT + Veritas (SG).

- Clear stance on data handling – PII redaction, training-data consent, vendor data-residency selection.

- Build-vs-buy honesty – willing to recommend a foundation-model API or off-the-shelf when bespoke is overkill.

- Evaluation rigour – offline plus online plus LLM-as-judge plus human spot-checks, with reports retained per audit cadence.

- Delivery model that doesn’t blow your data scope wide open – dedicated team, segregated networks, no unmanaged BYOD on customer data.

FAQs

1. What is AI in fintech?

2. What are the top AI fintech use cases in 2026?

3. Is AI taking over fintech?

4. What is the difference between predictive ML, generative AI, and agentic AI in fintech?

5. Does the EU AI Act apply to my fintech?

6. How do MAS FEAT and the Veritas toolkit shape AI deployment in Singapore?

7. What does APRA expect from Australian fintechs using AI?

8. Should fintechs build their own AI model or use foundation-model APIs?

9. Is generative AI safe for customer-facing banking apps?

10. How much does it cost to build an AI feature in a fintech app?

Ship AI in Fintech as Product, Not as Demo

- PCI DSS for fintech engineering teams – where AI training data touches cardholder data.